Data centres have always required some kind of cooling system. But as IT systems become more sophisticated, their power consumption grows and so does their heat output. Too much heat can fry your CPUs and GPUs, and even create a fire risk, so cooling is an essential part of server hosting. At the same time, our awareness of our environmental responsibilities has also increased. We need to cool – but we need to do so efficiently to be eco-friendly. So where are we now, how did we get here, and what should you be using?

In it for the CRAC

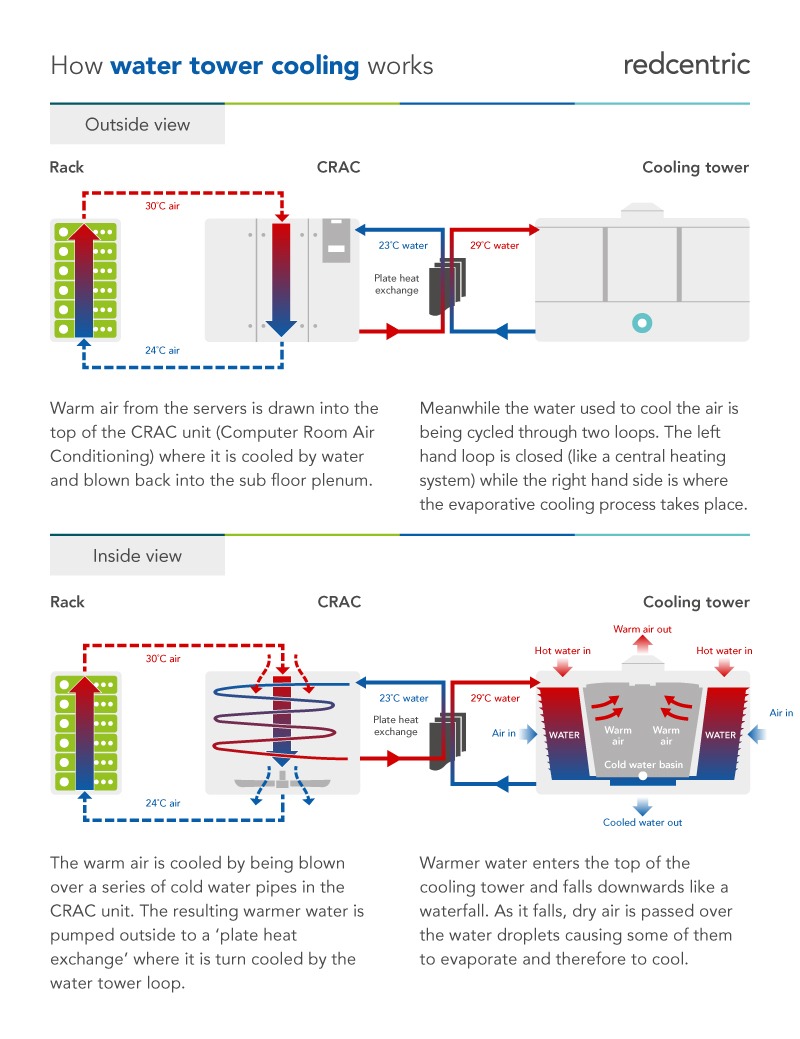

Computer room air conditioning (CRAC) is the standard method of keeping the data floor cool, a step beyond the traditional air conditioning units found in homes and offices (and data centres, once upon a time). This setup sends chilled air beneath the raised floor and then pushes it up through perforated tiles to cool the machines. This typically works well enough for low-density computing, where the power demand is modest and the cooling requirement is correspondingly low.

However, there are a couple of problems with this system:

- The CRAC and computer room air handling (CRAH) systems that cool the air can be incredibly energy-intensive – bad for the environment and your budget.

- Although the air near the floor is very cold, it quickly warms as it rises and mixes with the hot air coming from the servers, meaning the servers at the top of the racks are not getting the same cooling benefit.

These problems led to more effective methods of cooling being deployed in data centres.

Containment

Hot and cold aisle configurations were created to solve the problem of recirculating air. Instead of mixing it all together, the data floor is divided into enclosed, cold rows and open, warm rows. The cool air is distributed to the front of the servers (facing into the enclosed cold aisles), while the hot air coming from the fans at the back of the servers spews out into the hot aisles. This allows the cold air to do its job before it warms up, which makes the system more efficient.

But the CRAH and CRAC are still using a lot of power.

Saving energy with more precise cooling

Traditional cooling systems pushed cold air out using fans that operated at constant speeds. This not only resulted in constant energy use, it also meant that data centre operators lacked the precise control needed to match cooling to demand. Modern systems use variable speed fans, which make cooling more targeted and more reactive.
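To see why variable speed matters, here is a minimal sketch based on the fan affinity laws, under which an ideal fan's power draw scales with the cube of its speed. The function name and the example speeds are illustrative assumptions, not figures from any particular cooling system, and real fans will deviate from the ideal curve.

```python
def fan_power_fraction(speed_fraction: float) -> float:
    """Estimate fan power as a fraction of full-speed power.

    Uses the idealised fan affinity law (power scales with speed cubed).
    The name and the 0..1 input range are assumptions for illustration.
    """
    if not 0.0 <= speed_fraction <= 1.0:
        raise ValueError("speed_fraction must be between 0 and 1")
    return speed_fraction ** 3


if __name__ == "__main__":
    # Hypothetical fan speeds, expressed as a fraction of full speed.
    for speed in (1.0, 0.8, 0.5):
        share = fan_power_fraction(speed)
        print(f"{speed:.0%} speed uses about {share:.1%} of full power")
```

Under this idealised model, slowing a fan to 80% of full speed roughly halves its power draw, which is why matching fan speed to demand saves far more energy than the speed reduction alone suggests.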

As well as controlling air flow, operators can now place cooling systems within a rack, directly next to the heat-producing equipment, to quickly and efficiently draw off heat from high-density systems.

At Redcentric, we use rear door cooling for our HPC racks, which acts a bit like a reverse radiator. A network of fully-sealed pipes sends cold water to cool the backs of the servers – the side facing the hot aisles. This helps stabilise server temperatures and protects the overall efficiency of the data centre’s cooling system by preventing the air coming off the servers from being too hot. And the best part: our water is cooled (almost) for free.

Low-cost cooling

One thing we have in abundance in the UK is cold air, so it makes sense to try to use it to cool a data centre. Free-air cooling systems work by bringing outside air into the data centre and expelling warm air, so nothing has to be mechanically cooled.

Of course, this has its own problems:

- Outside air is contaminated with dust, pollen, and whatever else is in your local environment

- It’s not always cold enough to do the job – so free-air cooling is much easier in the UK than it is in Dubai, and even the UK gets hot enough in the summer to cause problems

But with suitable local conditions and some clever engineering, using outside temperatures can be a significant energy saver without compromising the integrity of the data centre.
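As a rough illustration of how much local conditions matter, the sketch below estimates the share of time that outside air is cool enough to use. The function name, the 20 °C threshold and the temperature readings are all invented for the example; a real assessment would also account for humidity, air quality and the facility's own design limits.

```python
from typing import Iterable


def free_cooling_fraction(outside_temps_c: Iterable[float],
                          max_usable_temp_c: float) -> float:
    """Return the fraction of readings at or below the usable threshold.

    Both the threshold and the readings are hypothetical inputs;
    this ignores humidity and contamination entirely.
    """
    temps = list(outside_temps_c)
    if not temps:
        raise ValueError("at least one temperature reading is required")
    usable = sum(1 for t in temps if t <= max_usable_temp_c)
    return usable / len(temps)


if __name__ == "__main__":
    # Made-up sample of outside temperatures in degrees Celsius.
    readings = [4.0, 9.5, 14.0, 21.0, 27.5, 18.0, 11.0, 6.5]
    share = free_cooling_fraction(readings, max_usable_temp_c=20.0)
    print(f"Outside air is usable for {share:.0%} of the sampled readings")
```

Running the same calculation against a hotter climate's readings would give a much lower share, which is the point made above about the UK versus Dubai.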

Here at Redcentric, we use an adiabatic cooling system, with the water in our cooling towers being cooled by outside conditions. This is one of the world’s most effective and energy-efficient cooling methods:

- The cooling itself relies on outside conditions rather than energy-hungry refrigeration, so no high energy costs

- The water from the cooling towers doesn’t enter the data centre, so no opportunity for contamination (heat exchangers use the water from the cooling towers to cool the clean water in the data centre)

It’s not entirely free – but it’s very inexpensive compared to conventional air conditioning systems and is a major part of what enabled us to achieve a PUE (Power Usage Effectiveness) of just 1.12.
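For readers unfamiliar with the metric, PUE is the total energy used by a facility divided by the energy used by the IT equipment alone, so a value of 1.0 would mean no overhead at all. The sketch below shows the arithmetic; the 1,000 kW IT load and the comparison value of 2.0 are hypothetical figures chosen for illustration, and only the 1.12 comes from the text above.

```python
def pue(total_facility_kw: float, it_load_kw: float) -> float:
    """PUE = total facility power / IT equipment power."""
    if it_load_kw <= 0:
        raise ValueError("it_load_kw must be positive")
    return total_facility_kw / it_load_kw


def overhead_kw(it_load_kw: float, pue_value: float) -> float:
    """Power spent on everything except IT (cooling, power losses, lighting)."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * (pue_value - 1.0)


if __name__ == "__main__":
    it_load = 1000.0  # hypothetical IT load in kW
    for value in (2.0, 1.12):  # 2.0 is an assumed comparison point
        extra = overhead_kw(it_load, value)
        print(f"PUE {value}: about {extra:.0f} kW of overhead "
              f"for {it_load:.0f} kW of IT load")
```

In other words, at a PUE of 1.12 roughly 0.12 kW of overhead is spent for every 1 kW delivered to the IT equipment.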

What happens next?

Adiabatic cooling and our rear door cooling system work extremely well for us – so much so that we’ve won numerous green awards over the years. But we’re always keeping an eye on future developments, to see if we can achieve even greater efficiency.

Direct liquid cooling is considered one promising option in the evolution of computer cooling technologies. There are two main ways this can be incorporated in a data centre:

- Direct-to-chip cooling uses pipes to deliver coolant directly to a cold plate that is integrated into the server

- Immersion cooling is exactly what it sounds like – immersing the hardware (encased in a leak-proof container) into a non-conductive, non-flammable dielectric fluid

These systems are efficient and clean, but they also rely on the server owners being on board with the approach – and even adapting their hardware accordingly. Good for major companies operating their own data centres, perhaps, but not practical for a retail colocation provider at this stage.

Finding efficiencies

Cooling was long the bane of the data centre industry: expensive, inefficient, yet essential. As the world’s reliance on digital technologies increases, and the compute density of those technologies grows, we as an industry need to ensure we are doing all we can to reduce our environmental impact while delivering the services that our customers need. Cooling technology has come a long way. Redcentric are proud to have achieved a PUE below 1.2, and we hope other data centres adopt similar eco-friendly technologies.

If you’re interested in hosting your IT with our award-winning cooling systems, check out our colocation services, any of our other services, or get in touch.